Robots.txt File

Real Robots.txt

The sitemap lays out the structure of your entire website. The robots.txt file then tells search engine crawlers which pages they may crawl and which they should skip. It sits in the root directory of your site and steers search engines away from the pages you have blocked.

That said, robots.txt does not secure information: the file itself is public, and crawlers follow it voluntarily. To keep content truly out of reach, make the page private or password-protect it so that Google cannot crawl it.
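To make the crawl rules concrete, here is a minimal sketch that uses Python's standard `urllib.robotparser` module to read a robots.txt file and check which URLs a compliant crawler may fetch. The file contents, the `www.example.com` domain, the blocked paths, and the `may_crawl` helper are all assumptions made up for illustration, not rules from a real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths and sitemap URL are examples only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /checkout/
Allow: /

Sitemap: https://www.example.com/sitemap.xml
"""

# Parse the rules from text instead of downloading them from a live site.
parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_crawl(path: str, user_agent: str = "*") -> bool:
    """Return True if a compliant crawler is allowed to fetch the given path."""
    return parser.can_fetch(user_agent, "https://www.example.com" + path)


print(may_crawl("/blog/seo-basics"))  # True: no Disallow rule matches
print(may_crawl("/admin/settings"))   # False: blocked by "Disallow: /admin/"
```

Nothing in this check enforces the rules. It only reports what a well-behaved crawler would do, which is why sensitive pages still need real access control.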

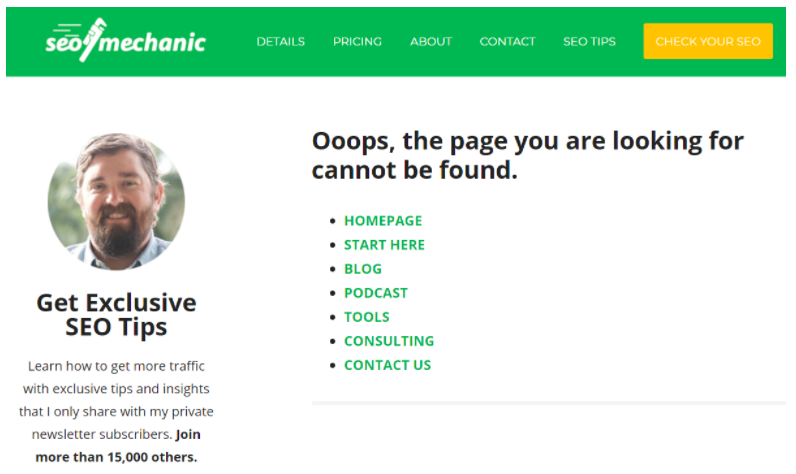

Also, when you use robots.txt, visitors may still be directed to pages that are no longer there. If that happens, create a custom 404 error page that redirects them to another page on your website.
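One way to picture a custom 404 page is the rough sketch below, built on Python's standard `http.server` module. The `KNOWN_PAGES` mapping, the `not_found_page` helper, and the `SiteHandler` class are hypothetical names for illustration. A production site would normally configure this in its web server or CMS instead.

```python
from html import escape
from http.server import BaseHTTPRequestHandler

# Hypothetical pages that still exist on the site.
KNOWN_PAGES = {"/": "<h1>Home</h1>", "/blog/": "<h1>Blog</h1>"}


def not_found_page(missing_path: str, fallback_url: str = "/") -> str:
    """Build the HTML body of a custom 404 page that links to a live page."""
    return (
        "<!doctype html><title>Page not found</title>"
        f"<h1>Sorry, {escape(missing_path)} is no longer here.</h1>"
        f'<p><a href="{escape(fallback_url)}">Go to the home page</a></p>'
    )


class SiteHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        body = KNOWN_PAGES.get(self.path)
        status = 200
        if body is None:
            # Keep the 404 status so crawlers learn the page is gone,
            # while human visitors get a link to a page that exists.
            status, body = 404, not_found_page(self.path)
        payload = body.encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)
```

To try it locally, pass `SiteHandler` to `http.server.HTTPServer(("127.0.0.1", 8000), SiteHandler)` and call `serve_forever()`. Any path outside `KNOWN_PAGES` then returns the custom page with a 404 status.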
